Deepfakes: Your Identity Systems Verify Credentials — Not People

These attacks exploit the boundary where technical IAM controls end and human judgment begins.

Deepfake Identity Attacks Are Already Happening

[Statistics graphics omitted. Sources cited: Industry Study; Analyst Projection.]

What IAM Leaders Need to Know

The identity infrastructure built to secure access verifies credentials, not the real human behind the interaction.

AI-generated voice and video can now impersonate legitimate users in real time. During support calls, onboarding, or approval workflows, identity is often assumed based on appearance, voice, or video presence rather than cryptographic proof.
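To make the contrast between appearance and cryptographic proof concrete, here is a minimal sketch of a challenge-response check. It is an illustration under stated assumptions, not a FIDO2 implementation: real passkeys use a public/private key pair held on the user's device, while this sketch uses a shared-secret HMAC from the Python standard library as a stand-in. The names `DEVICE_KEYS`, `issue_challenge`, `device_respond`, and `verify_response` are hypothetical.

```python
import hashlib
import hmac
import secrets

# Hypothetical enrollment store: user id -> secret key provisioned to the user's device.
# Assumption: real passkeys/FIDO2 use asymmetric key pairs; HMAC is a stdlib stand-in.
DEVICE_KEYS = {"alice": secrets.token_bytes(32)}


def issue_challenge() -> bytes:
    """Return a fresh random nonce so a recorded response cannot be replayed."""
    return secrets.token_bytes(16)


def device_respond(key: bytes, challenge: bytes) -> bytes:
    """What the enrolled device computes: a MAC over the challenge."""
    return hmac.new(key, challenge, hashlib.sha256).digest()


def verify_response(user: str, challenge: bytes, response: bytes) -> bool:
    """Accept only if the response was produced with the enrolled key."""
    key = DEVICE_KEYS.get(user)
    if key is None:
        return False
    expected = hmac.new(key, challenge, hashlib.sha256).digest()
    # Constant-time comparison avoids leaking how many bytes matched.
    return hmac.compare_digest(expected, response)
```

A convincing face or voice contributes nothing to this check: without the enrolled key, the caller cannot produce a valid response to a fresh challenge.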

When an impersonator gets through one of those interactions, the result is still an identity and access failure. Even if the weakness lies outside IAM systems, IAM leaders remain responsible for preventing unauthorized access.

What Prepared IAM Programs Are Doing

- Deploy real-time deepfake detection to analyze voice and video interactions

- Strengthen biometric systems with liveness detection and anti-spoofing technology

- Transition to phishing-resistant authentication such as passkeys and FIDO2

- Require out-of-band verification for credential resets and privileged access changes

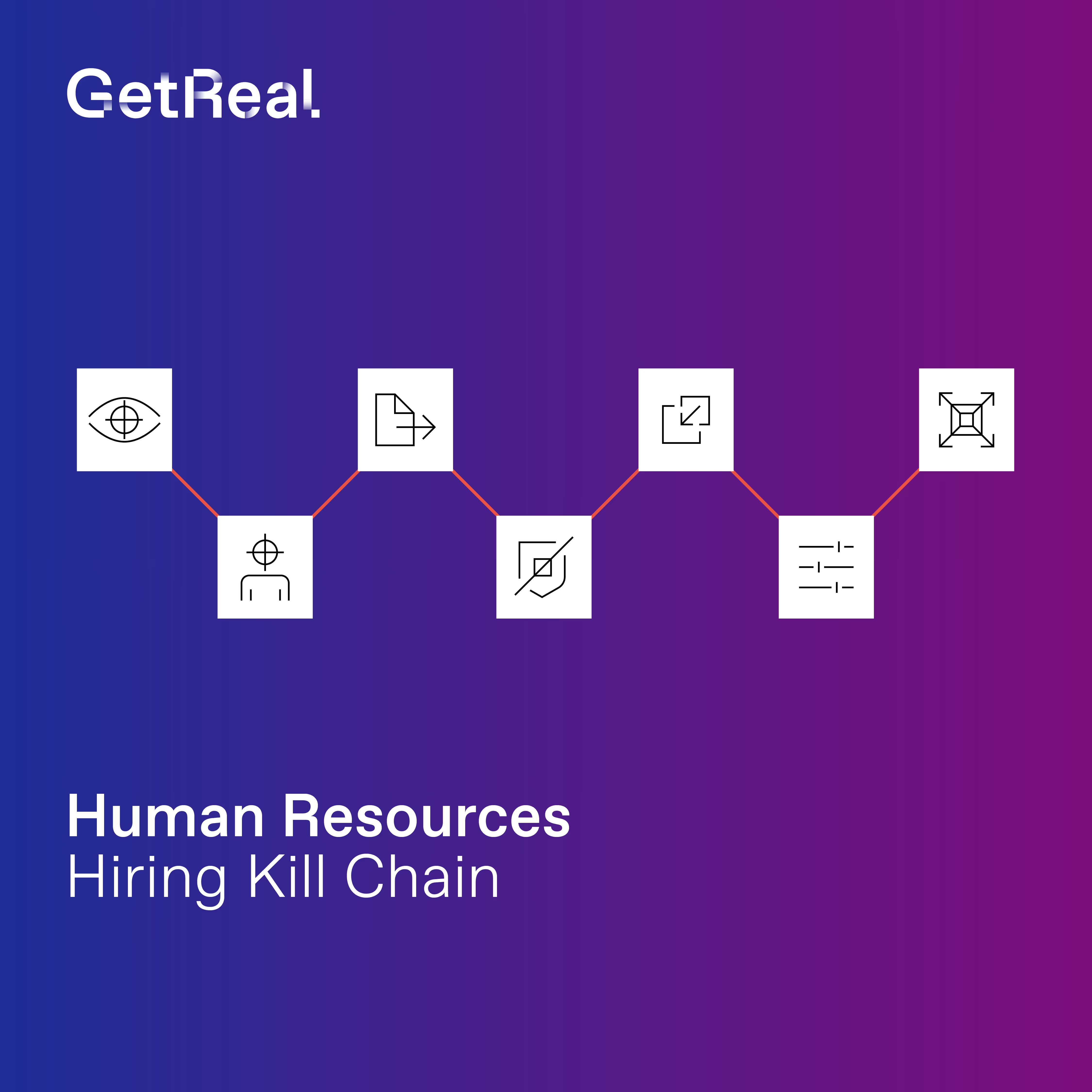

- Extend identity verification standards into HR onboarding and hiring workflows
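The out-of-band verification item in the list above could be expressed as a policy check along the following lines. This is a sketch under assumptions, not a reference design: the action names, the channel names, and the `Request` and `is_approved` identifiers are all hypothetical, and the sketch only gates sensitive actions, leaving everything else to whatever normal policy applies.

```python
from dataclasses import dataclass

# Hypothetical action names; adapt to your own workflow catalogue.
SENSITIVE_ACTIONS = {"credential_reset", "privileged_access_change"}


@dataclass
class Request:
    user: str
    action: str
    request_channel: str  # channel the request arrived on, e.g. "video_call"
    confirmed_channels: frozenset = frozenset()  # channels on which the user confirmed


def is_approved(req: Request, enrolled_channels: set) -> bool:
    """Approve sensitive actions only after confirmation on a different, pre-enrolled channel."""
    if req.action not in SENSITIVE_ACTIONS:
        return True
    # A confirmation counts only if the channel was enrolled in advance
    # and is not the same channel the request itself came in on.
    out_of_band = (req.confirmed_channels & enrolled_channels) - {req.request_channel}
    return bool(out_of_band)
```

For example, a credential reset requested on a video call stays blocked until the user confirms through a channel enrolled beforehand:

```python
enrolled = {"passkey_prompt", "callback_to_number_on_file"}
pending = Request("alice", "credential_reset", "video_call")
confirmed = Request("alice", "credential_reset", "video_call", frozenset({"passkey_prompt"}))
print(is_approved(pending, enrolled), is_approved(confirmed, enrolled))
```

The design choice is that the requester cannot nominate the confirmation channel during the call, so a deepfaked caller who supplies a "new" phone number gains nothing.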

Organizations should treat deepfake-enabled identity impersonation as a strategic IAM risk and strengthen identity verification across human workflows.
